Also known as: "what most CRO agencies won't tell you about split testing"...

There are a couple of scenarios we hear often at Scale Beyond when talking to new clients who’ve either managed CRO internally, or worked with other CRO agencies on split testing programmes:

- The “guaranteed” increases in performance promised from tests haven’t been reflected in real world site performance over an extended period once the changes were pushed live.

- Despite the split testing results, the changes just don’t “feel” right – you know your customers better than anyone, and you can’t logically see a way a particular change could have had such a supposedly large impact on key metric performance for your ecommerce store.

The above are both very common scenarios in real world testing. But if you’re using world renowned split testing software (be it Convert.com, VWO, Optimizely or one of many others) used by huge multinational ecommerce brands, and that software is telling you with 99% certainty that a test version is a “winner” versus your current site, you simply can’t argue with that, can you?

Well actually, you can, and in many cases you probably should.

But here’s the kicker; many, many agencies offering CRO either don’t understand this fully, or they’re not sharing that knowledge with you in order to make their lives easier (honestly in our experience, it’s normally the former).

A real world example…

It’s probably easiest to demonstrate this with a real live testing example for you. One of our split testing clients generously allowed us to run the following test on their store whilst no other tests were running; the site runs on Shopify and has a mid-7-figure yearly turnover.

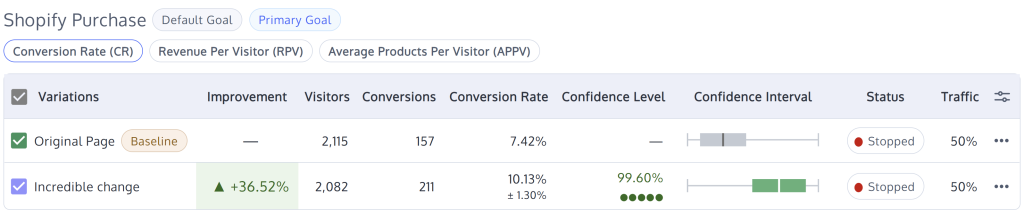

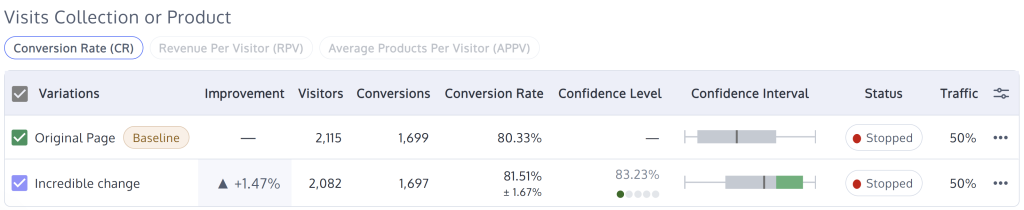

In 2025 we implemented an A/B test on the homepage of their site targeting a subset of customers, and the results were fantastic; you can see the full results below directly from Convert.com.

Not only did we see a huge increase in overall conversion:

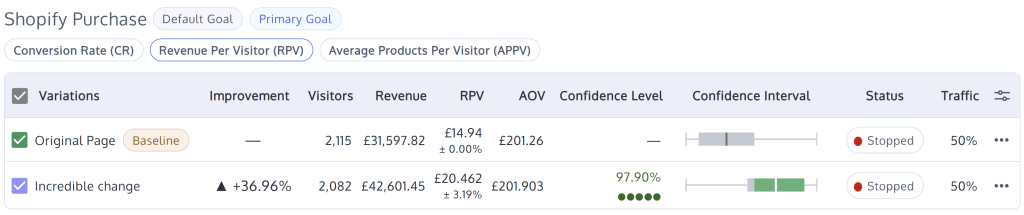

But we also saw an even bigger improvement in RPV (revenue per visitor) and overall revenue recorded:

Pretty conclusive right? Based on the above, this single test in fact should add several hundred thousand pounds in additional yearly revenue for this ecommerce store (when deployed fully and shown to all users). What a result!

There’s just one teeny tiny problem though with this test I should probably share. Both the original page and B test variation were…

Exactly the same

Wait…. what?

Now if you don’t really understand ecommerce or split testing, your first reaction might be “your testing setup must be broken!” – trust me, it’s not. Nor is this about Frequentist vs Bayesian testing, one-tailed vs two-tailed test types or Šidák vs Bonferroni correction; no, this fundamentally comes down to whether or not you understand split testing principles and how users interact with ecommerce websites.

You might also say “well, the test is simply not valid!” if both test versions are the same, but here’s the thing. We could have changed anything minor on the homepage for the test, such as the background of an image, a little block of text, the direction a model is looking (my personal favourite; I see people proclaiming massive uplifts from that so often!), or something else that realistically could have no impact on overall conversion, and we would have achieved exactly the same result.

And yet, it’s exactly those kinds of tests we see CRO agencies implementing (and then proclaiming the glorious results for) all the time.

But obviously (as you can clearly see above), if you implement such a change, you’re not actually going to see any performance improvement whatsoever over an extended time period.

Why does this happen?

The “why” is quite a complex question to answer in a simple online post, but fundamentally, it all comes down to intent.

When a split test is run, all split testing software (to an extent) assumes every user seeing a test variation has some intent to complete the goal you’re testing against.

The reality of course is very different, and at Scale Beyond that’s something we know after over 20 years working in ecommerce, including thousands of hours watching customers interact with websites (including live moderated testing) and actually speaking directly to thousands (honestly) of end users; that’s where having people on board with extensive client-side experience is so incredibly valuable for agencies offering CVO/CRO services.

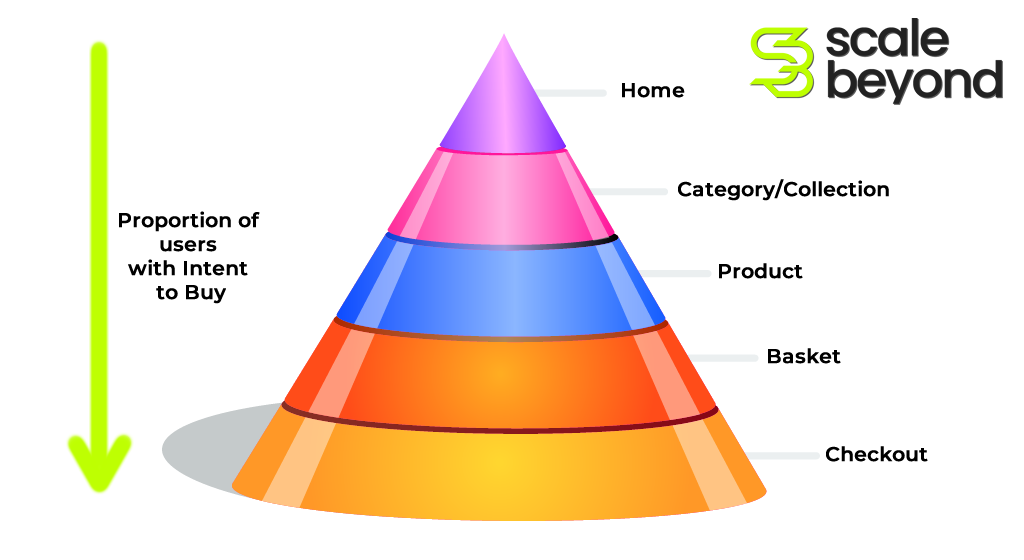

For example, even for a high performing ecommerce site, at least 80% of users landing on your homepage will likely have absolutely zero intent to buy in that current session. That means there is nothing you can do to convince them; there’s no on site change you could possibly make that would lead to an overall site purchase conversion. So whatever test version you show them, they won’t convert.

Likewise, if you have strong brand equity, you may be fortunate to have the reverse; users landing on your homepage who will absolutely buy in that session, no matter what you show them (even if they are new to the site; we typically try to target only new users for tests). As long as it’s physically possible to purchase from you, they’ll find a way, so essentially they’re not influenced by any test either – they’re going to buy regardless.

But the reality of testing is you will end up showing test variations to both of the above customer types, because there’s no real foolproof way to immediately know the purchase intent of a user when they land on your website. The only users testing actually influences in ecommerce are what we call “possibles” (users who aren’t likely to buy but have some intent) and “probables” (users who have strong intent to buy but it’s not definite they will).
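To make the point above a little more concrete, here’s a rough Python sketch of a homepage test where both versions are identical. Every number in it is an assumption for illustration only (80% of visitors with zero intent, 2% who will buy regardless, and the remaining “possibles” and “probables” converting 10% of the time, which works out at an expected overall conversion of 3.8%); none of it comes from the client test shown earlier. It simply counts how often two identical arms end up looking at least 10% apart on overall conversion purely through chance.

```python
import random


def simulate_identical_test(visitors_per_arm=5000, seed=0,
                            no_intent=0.80, certain=0.02,
                            persuadable_rate=0.10):
    """Simulate one test where both arms show exactly the same page.

    All segment sizes and rates are illustrative assumptions.
    Returns (orders_in_arm_a, orders_in_arm_b).
    """
    rng = random.Random(seed)

    def orders(n):
        count = 0
        for _ in range(n):
            r = rng.random()
            if r < no_intent:
                continue                  # zero intent: never buys this session
            if r < no_intent + certain:
                count += 1                # will buy whatever they are shown
            elif rng.random() < persuadable_rate:
                count += 1                # "possibles" and "probables"
        return count

    return orders(visitors_per_arm), orders(visitors_per_arm)


def share_of_large_swings(runs=100, threshold=0.10, **kwargs):
    """Fraction of simulated tests where the arms differ by >= threshold (relative)."""
    large = 0
    for seed in range(runs):
        a, b = simulate_identical_test(seed=seed, **kwargs)
        if a > 0 and abs(b - a) / a >= threshold:
            large += 1
    return large / runs


if __name__ == "__main__":
    # Share of identical-page tests showing an apparent swing of 10% or more.
    print(share_of_large_swings())
```

Because so few visitors in each arm can actually be influenced, the order counts are small, and small counts swing a long way on their own. Try raising `visitors_per_arm` or changing the assumed segment sizes to see how the share of “large” swings changes.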

And that’s where really understanding ecommerce, user behaviour, and thinking before you test, is so important for any agency partner you work with on testing.

So…. what’s the solution?

In short, the easiest solution for you is to work with an agency who really understands ecommerce, split testing and how users interact with websites – we happen to know a very good one 🙂 Please feel free to get in touch for a no obligation conversation about what we can offer.

But to offer a bit more explanation on how to mitigate these risks, one of the core principles of how we operate testing is to focus on micro rather than macro conversions. An easy example is homepage tests; the primary goal of a homepage test should always be to get users to the next stage of the journey (i.e. to get them off the homepage) – that’s normally to a category/collection page, or directly to a product page.

The idea that most users can even remember what they saw on the homepage after they’ve gone through all the stages of browsing for products, selecting a product, adding to basket, going to checkout, entering their billing information, entering their credit card information, and finally placing an order, is of course, completely nonsensical when you actually think about it. Yet consistently we see ecommerce businesses and CRO agencies test based on that logic.

The actual primary goal for the above test should have been getting to a Shopify collection or product page, and you can see from the results below that the result here was far less pronounced (which makes a huge amount of sense when you understand how users interact with websites):

We do of course measure all tests against overall conversion and revenue too (it’s always interesting to see) but the primary goal assigned to a test is so important. When looking at overall conversion and revenue, intent to buy has a massive impact on testing.

We purposely picked a homepage test above as it’s the easiest way to demonstrate this, since the further a user progresses through your site, the higher their intent to buy is likely to be, and therefore the less likely it is you’ll see a false positive when measuring against overall site conversion and revenue.
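One simplified statistical way to look at micro versus macro goals is to compare how noisy each measurement is relative to its own size. The sketch below uses the standard error of a proportion; the two rates (40% of homepage visitors reaching a collection or product page, 3% placing an order) and the 5,000 visitors are hypothetical numbers picked for illustration, not figures from the test above.

```python
import math


def relative_standard_error(rate, visitors):
    """Standard error of an observed rate, expressed as a fraction of that rate."""
    return math.sqrt(rate * (1 - rate) / visitors) / rate


# Hypothetical figures for one test arm of 5,000 homepage visitors.
micro = relative_standard_error(0.40, 5000)  # reached a collection/product page
macro = relative_standard_error(0.03, 5000)  # placed an order

print(f"micro goal noise: {micro:.1%}")  # roughly 1.7% of the rate
print(f"macro goal noise: {macro:.1%}")  # roughly 8% of the rate
```

Under these assumed numbers, the order rate is several times noisier than the click-through rate for the same traffic, which is one reason an apparent “uplift” in overall conversion from a homepage test deserves more suspicion than a change in the next-step goal.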

Another fundamental step we take for all tests is to run them as A/B/A tests – whilst it’s still absolutely possible to generate a false positive in this way (and from a purely statistical level, more variations means a slight increase in the chance of that being the case), we know from thousands of real world tests that the practical reality of testing in this way means it’s far less likely to draw the wrong conclusion from a test.
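As a rough sketch of both halves of that trade-off, the Python below first shows how the chance of at least one false positive grows with the number of comparisons (assuming independent comparisons, which is a simplification when arms share traffic), and then applies one possible A/B/A reading rule. The rule, the function names and the 5% threshold are all hypothetical choices for illustration; this is not a description of our actual decision process or of how any testing tool works.

```python
import math


def family_wise_error(alpha, comparisons):
    """Chance of at least one false positive across independent comparisons."""
    return 1 - (1 - alpha) ** comparisons


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return math.erfc(abs(z) / math.sqrt(2))


def aba_verdict(a1, b, a2, alpha=0.05):
    """Hypothetical A/B/A rule. Each arm is a (conversions, visitors) tuple."""
    # If the two identical A arms disagree, the data is too noisy to trust.
    if two_proportion_p_value(*a1, *a2) < alpha:
        return "inconclusive: identical arms disagree"

    def beats(a):
        higher = b[0] / b[1] > a[0] / a[1]
        return higher and two_proportion_p_value(*a, *b) < alpha

    if beats(a1) and beats(a2):
        return "B wins against both A arms"
    return "no reliable winner"


print(round(family_wise_error(0.05, 2), 4))  # two comparisons at alpha = 0.05
print(aba_verdict((100, 5000), (160, 5000), (100, 5000)))
```

The design choice here is that the second A arm acts as a built-in sanity check: a “winner” only counts if it beats both copies of the original, and the whole test is set aside if the two copies of the original disagree with each other.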

The downside of this approach (and the reason most CRO agencies don’t do this) is that it’s much harder work for us to prove a change is right versus just throwing up tests like the above and waiting for a “proven” winner to emerge.

However at Scale Beyond, everything we do around split testing is built to deliver transparency for our clients and ensure repeatable results; if you don’t take that approach, there’s really no point at all in even running split testing on your ecommerce site.

If the above resonates with you, please do get in touch for a no obligation conversation about what we can offer – we work with a range of clients from huge multinationals to much smaller businesses, and despite our level of experience and expertise, you may well be pleasantly surprised by how affordable the service we offer is.

Any final tips for selecting a CVO/CRO split testing agency?

We know Scale Beyond won’t be the right agency partner for every client, and not every client is the right partner for Scale Beyond. We’re also sure there are many agencies out there delivering CVO/CRO services who may be a better fit for you (and there are lots of CRO agencies we admire who are doing great work).

But just to conclude this post, we’ve collated a few top tips to help you select the right CVO/CRO split testing agency for you:

- Be careful if a CRO agency only works with one platform, for example Shopify. We’re big Shopify fans (and many of our clients use this) but there are many verticals (such as complex B2B operations) where Shopify is not the right platform. The knowledge a CVO/CRO agency will gain from working with complex ordering requirements on different platforms (over a longer time period than Shopify has been established) is invaluable in shaping their overall knowledge and understanding of CRO and testing. Naturally at Scale Beyond, we’re completely platform agnostic and love driving results even on complex sites (our team even ran tests on ancient platforms like OsCommerce back in the day).

- Don’t be misled by AI audits; the “AI” is always just working through a predetermined list of check points, not applying any real world knowledge and experience. Whilst you may find some value from this if you’re just starting out, it’s of limited use to established ecommerce brands.

- Don’t be afraid to look up official company information for a business before engaging them. The agency market is saturated and sadly many agencies do fall into liquidation; the last thing you want is to invest your time and energy into building a relationship, only for that agency to disappear (or relaunch as a phoenix after liquidation, leaving creditors unpaid, which is also common). Any legitimate agency will readily show their registered company details online, which you can then check to ensure their financial stability.

- And finally, make sure your agency is focusing on the correct metrics when testing and not just revenue for every test (as you can hopefully see above!).